AI Observability Agents as "Platform Experts"

As AI agents flood the enterprise software landscape, companies must connect them to their existing data hubs. In data analytics, that means platforms like Snowflake. In CRM and the front office, platforms like Salesforce. In observability, names like Datadog and Splunk. While these platforms ship their own built-in AI agents, a parallel movement relies on external agents that connect through API integrations (and now MCP). Philosophically, we can think of these external agents as having to “onboard” the way a new employee would. This idea led us to build our very own “Datadog expert” that can work alongside the platform's internal AI agent (“Bits”).

It starts with knowledge gathering

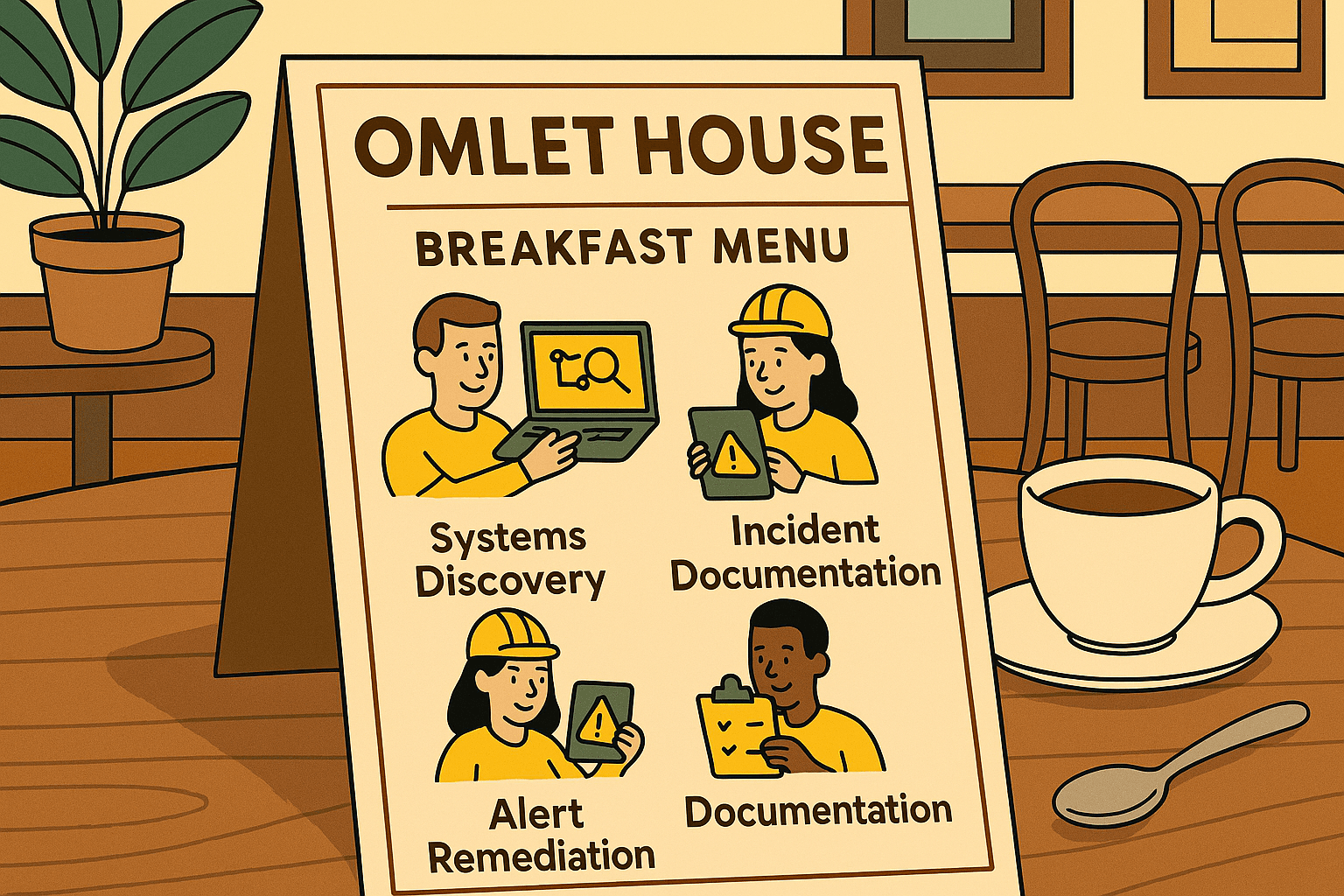

The first thing a new user typically does is learn the platform's dynamics, data structures, and goals by reading the relevant documentation. This includes query languages, UI mechanics, APIs, and more. For our expert, we had our agent thoroughly explore platform documentation and indexed knowledge. The agent then organized this information into clear markdown and created visual diagrams for future reference (as shown below).

With a grounded knowledge base, our agent can explore and experiment with platform capabilities effectively.

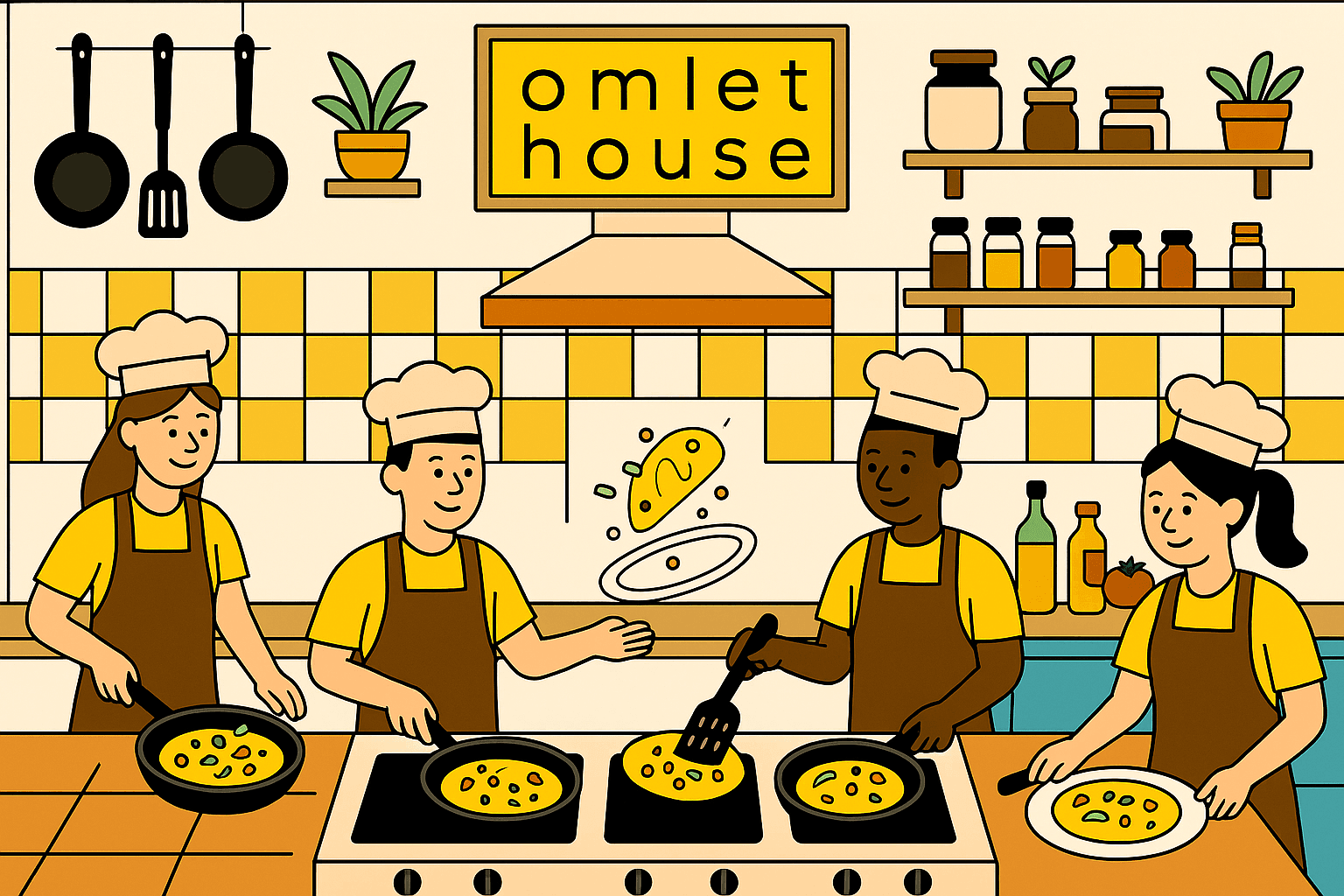

It progresses to deterministic tools

Once our agent understands "how to access" a platform, using credentials or OAuth just like a user would, it can move on to creating precise tools. While MCP is a good general-purpose protocol for building and distributing tools, we can also rely on OS first principles and let the agent execute code directly.

In the example above, we demonstrate one tool. Notice how much precision matters in helping the agent recover from errors, including its own mistakes. We can also create highly specific tools that follow a structure defined by the user (such as querying only one namespace or always applying certain filters). We find particular value in these structured implementations, as they put strong guardrails around otherwise dynamic query languages.
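To make the idea of a structured tool concrete, here is a minimal sketch in Python. It is not our production tool. The function name, the allow-lists, and the `kube_namespace`, `service`, and `status` tag names are assumptions for illustration, and the sketch only builds a query string in `attribute:value` form without calling any platform API. The point is the shape: the agent can only query approved namespaces, and every error message says what was wrong and how to fix it.

```python
from dataclasses import dataclass

# Hypothetical allow-lists; a real deployment would load these from team config.
ALLOWED_NAMESPACES = ("checkout", "payments")
ALLOWED_STATUSES = ("error", "warn", "info")


class ToolInputError(ValueError):
    """Error whose message tells the agent what was wrong and how to fix it."""


@dataclass(frozen=True)
class LogQuery:
    query: str           # search string in attribute:value form
    window_minutes: int  # how far back to look


def build_namespace_log_query(namespace: str, service: str,
                              status: str = "error",
                              window_minutes: int = 15) -> LogQuery:
    """Build a log query pinned to one approved namespace (assumed tag names)."""
    if namespace not in ALLOWED_NAMESPACES:
        raise ToolInputError(
            f"namespace '{namespace}' is not allowed; "
            f"use one of: {', '.join(ALLOWED_NAMESPACES)}"
        )
    if status not in ALLOWED_STATUSES:
        raise ToolInputError(
            f"status '{status}' is not supported; "
            f"use one of: {', '.join(ALLOWED_STATUSES)}"
        )
    if not service or any(ch.isspace() for ch in service):
        raise ToolInputError(
            "service must be a non-empty name without spaces, e.g. 'api'"
        )
    if not 1 <= window_minutes <= 1440:
        raise ToolInputError(
            f"window_minutes={window_minutes} is out of range; use 1 to 1440"
        )
    return LogQuery(
        query=f"kube_namespace:{namespace} service:{service} status:{status}",
        window_minutes=window_minutes,
    )
```

If the agent asks for a namespace outside the list, it gets back a `ToolInputError` naming the valid options, so its next attempt can correct itself instead of guessing.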

It culminates in sophisticated processes

Finally, what if we link all these precise tools together while still allowing for dynamic exploration and model analysis? This is where we can develop systematic processes that achieve a goal within the platform, such as a detailed investigation or a comprehensive overview of trends.
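One way to picture such a process is as an ordered chain of steps that share a context. The sketch below is illustrative only: `run_process`, the two step functions, and the `error_counts` input are hypothetical names, and the steps work on placeholder data rather than real platform calls. Each step reads what earlier steps found and adds its own findings, and a trace records what ran so a model (or a person) can review the path taken.

```python
from typing import Any, Callable, Dict, List

# A step takes the shared context and returns new findings to merge into it.
Step = Callable[[Dict[str, Any]], Dict[str, Any]]


def run_process(steps: List[Step], context: Dict[str, Any]) -> Dict[str, Any]:
    """Run steps in order, merging findings and recording a trace."""
    trace = []
    for step in steps:
        try:
            context = {**context, **step(context)}
            trace.append((step.__name__, "ok"))
        except Exception as exc:
            # Stop at the first failure and keep the reason for later review.
            trace.append((step.__name__, f"failed: {exc}"))
            break
    return {**context, "trace": trace}


def find_error_spike(ctx: Dict[str, Any]) -> Dict[str, Any]:
    """Hypothetical step: pick the service with the most errors."""
    counts = ctx["error_counts"]
    return {"suspect_service": max(counts, key=counts.get)}


def summarize(ctx: Dict[str, Any]) -> Dict[str, Any]:
    """Hypothetical step: turn the findings into a one-line summary."""
    return {"summary": f"Most errors in window: {ctx['suspect_service']}"}


result = run_process(
    [find_error_spike, summarize],
    {"error_counts": {"api": 3, "worker": 12}},  # placeholder input
)
```

In a fuller version, some steps would be deterministic tools and others would hand the accumulated context to a model for analysis; the chain structure stays the same.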

Our main goal, with targeted tools and advanced workflows, is to let observability data move beyond the limits of generic presentation and use. That's why, within our Agent OS platform, these components can be tailored to fit internal domain knowledge while also working with other agents. Imagine one agent gathering information and querying skillfully while another analyzes the results and weighs multiple hypotheses.